Game Dev Testing & QA: From 'It Compiles' to 'It Ships'

“Build succeeded” is the most dangerous lie in game development. Here’s how to add automated testing, performance gates, asset validation, and cert pre-checks to the Jenkins pipeline you built in Parts 1-3.

This is Part 4 of the “Build Your Game Dev Pipeline” series. Part 1: Task Management → Part 2: Perforce + Jenkins Triggers → Part 3: Build Configuration → Part 4: Testing & QA → Part 5: Deployment.

The Green Checkmark of Lies

The Jenkins dashboard is a wall of blue. Every build: success. The producer opens Slack: “Milestone build is ready for the publisher.”

QA opens it. Main menu runs at 12 FPS. Someone checked in uncompressed 8K textures for every UI element. The tutorial crashes two minutes in — a nullptr in a quest system that worked fine on the programmer’s machine because they had a save file that skipped the intro. And the PS5 build? Hard lock on the loading screen. A shader permutation that only manifests on AMD GPUs.

Every one of these builds compiled. Every one passed Jenkins. Every one was broken.

“Build succeeded” is the most dangerous lie in game development. It means the compiler didn’t throw an error. It says nothing about whether the game actually works. The gap between “it compiles” and “it ships” is where QA lives — and most studios try to fill that gap with manual testing alone.

Manual testing catches problems. Automated testing prevents them. This post is about building the second half of that equation into the pipeline you’ve been constructing since Part 1.

Why Game Dev Testing Is Different

If you’ve done testing in web dev, forget half of what you know. Game testing has fundamentally different constraints.

| Dimension | Web/Enterprise | Game Dev |
|---|---|---|
| Determinism | Same input → same output | Physics, AI, animation blending = non-deterministic |
| Platform matrix | 2-3 browsers | Win64, PS5, Xbox Series X, Switch, maybe mobile |
| Content as code | Assets are static files | A texture change can break performance budgets |
| Performance | “Fast enough” | 16.67ms per frame or players feel it |
| Output validation | Assert on data | “Does this look right?” is a real test case |
| Build time | Seconds to minutes | Minutes to hours |

The biggest shift: performance is correctness. A web app that’s 200ms slow is annoying. A game that drops below 30 FPS is broken. This means performance testing isn’t optional — it’s a first-class citizen alongside functional tests.
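The 16.67ms figure is just the reciprocal of the target frame rate, which makes it easy to derive the budget for any target. A minimal sketch of that arithmetic (the function names are illustrative and not part of any engine API):

```python
def frame_budget_ms(target_fps: float) -> float:
    """Milliseconds available per frame at a given target frame rate."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def over_budget(frame_time_ms: float, target_fps: float) -> bool:
    """True when a measured frame took longer than the budget allows."""
    return frame_time_ms > frame_budget_ms(target_fps)
```

A 20ms frame is within budget for a 30 FPS target but over budget for a 60 FPS one, which is why the same build can be fine on one platform and broken on another.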

The Testing Pyramid for Games

The classic testing pyramid still applies, but with game-specific layers:

┌─────────────┐
│ Manual QA   │ ← Game feel, art direction, "is it fun?"
├─────────────┤
│ Smoke/E2E   │ ← Boot test, critical paths
├─────────────┤
│ Integration │ ← Systems interaction, asset loading
├─────────────┤
│ Unit Tests  │ ← C++/C# module tests
└─────────────┘
║ Performance ║ ← Parallel dimension (runs at every level)

Here’s the uncomfortable truth: most game studios have zero automated tests. None. If you add a single boot test — does the game launch and reach the main menu? — you’re already ahead of the majority of the industry.

Start there. Seriously. A boot test that runs on every build catches more issues than you’d expect: missing assets, broken cook, initialization crashes, shader compile failures. It’s the highest-ROI test you’ll ever write.

Recommended test cadence:

| Test Type | When to Run | Target Duration |
|---|---|---|
| Unit tests | Every commit | < 5 min |
| Boot/smoke test | Every build | < 10 min |
| Integration tests | Nightly | < 30 min |
| Full smoke suite | Nightly | < 30 min |
| Performance tests | Nightly + release candidates | < 45 min |
| Full platform matrix | Release candidates only | < 2 hours |

Adding Automated Tests to Your Jenkins Pipeline

Note: These examples show the general pattern — adapt paths, flags, and API calls to your engine version and project setup. They’re starting points, not copy-paste solutions.

In Part 3, we built a Jenkins pipeline that syncs, builds, and archives. Now we add test stages. Here’s the extended pipeline:

// Extending Part 3's pipeline with test stages

pipeline {

agent { label 'build-machine' }

parameters {

string(name: 'CHANGELIST', description: 'Perforce changelist number')

choice(name: 'BUILD_TYPE', choices: ['Development', 'Shipping', 'Test', 'Debug'],

description: 'Build configuration')

choice(name: 'PLATFORM', choices: ['Win64', 'PS5', 'XboxSeriesX'],

description: 'Target platform')

}

stages {

stage('Sync') {

steps {

p4sync(

credential: 'perforce-creds',

populate: syncOnly(force: false, have: true, quiet: true),

source: depotSource('//depot/GameProject/...')

)

}

}

stage('Build') {

steps {

// ... (same as Part 3)

}

}

stage('Unit Tests') {

steps {

script {

sh """

${env.UE_ROOT}/Engine/Build/BatchFiles/RunUAT.sh RunTests \

-project="\${WORKSPACE}/GameProject.uproject" \

-testfilter="Project.Unit" \

-reportexportpath="\${WORKSPACE}/TestResults/Unit" \

-log

"""

}

}

post {

always {

junit 'TestResults/Unit/**/*.xml'

}

}

}

stage('Smoke Tests') {

when {

anyOf {

expression { params.BUILD_TYPE == 'Test' }

expression { params.BUILD_TYPE == 'Shipping' }

triggeredBy 'TimerTrigger' // Nightly builds

}

}

steps {

script {

sh """

${env.UE_ROOT}/Engine/Build/BatchFiles/RunUAT.sh RunTests \

-project="\${WORKSPACE}/GameProject.uproject" \

-testfilter="Project.Smoke" \

-reportexportpath="\${WORKSPACE}/TestResults/Smoke" \

-nullrhi \

-log

"""

}

}

post {

always {

junit 'TestResults/Smoke/**/*.xml'

}

}

}

stage('Performance Tests') {

when {

anyOf {

expression { params.BUILD_TYPE == 'Shipping' }

triggeredBy 'TimerTrigger'

}

}

steps {

script {

sh """

${env.UE_ROOT}/Engine/Build/BatchFiles/RunUAT.sh RunTests \

-project="\${WORKSPACE}/GameProject.uproject" \

-testfilter="Project.Performance" \

-reportexportpath="\${WORKSPACE}/TestResults/Perf" \

-log

"""

}

}

post {

always {

junit 'TestResults/Perf/**/*.xml'

archiveArtifacts artifacts: 'TestResults/Perf/**', allowEmptyArchive: true

}

}

}

stage('Archive') {

steps {

archiveArtifacts artifacts: 'Builds/**', fingerprint: true

}

}

}

post {

failure {

slackSend channel: '#builds',

message: "Build FAILED: CL ${params.CHANGELIST} (${params.PLATFORM} ${params.BUILD_TYPE})"

}

success {

slackSend channel: '#builds',

message: "Build + Tests PASSED: CL ${params.CHANGELIST} (${params.PLATFORM} ${params.BUILD_TYPE})"

}

}

}

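Notice the when blocks. The Unit Tests stage has none, so it runs on every build. The Smoke Tests stage (the full smoke suite) runs only for Test and Shipping builds or nightly timer triggers, and Performance Tests run only for Shipping builds or nightlies. This keeps commit builds fast while still catching regressions before they reach QA. The per-build boot test from the cadence table is not part of these stages; the Gauntlet script in the next section is one way to run it.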
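To make the conditions easier to reason about, the same logic can be written as a plain function. This is only a sketch that mirrors the three test stages in the pipeline above; stages_to_run and its nightly flag (standing in for the timer trigger) are hypothetical names, not Jenkins API.

```python
from typing import List


def stages_to_run(build_type: str, nightly: bool) -> List[str]:
    """Mirror the test stages' `when` conditions as a plain function."""
    stages = ["Unit Tests"]  # no `when` block: runs on every build
    if build_type in ("Test", "Shipping") or nightly:
        stages.append("Smoke Tests")
    if build_type == "Shipping" or nightly:
        stages.append("Performance Tests")
    return stages
```

A table of build type against trigger like this is also a cheap way to review the gating rules with QA before encoding them in the Jenkinsfile.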

Unreal Engine: Gauntlet Tests

Unreal’s Gauntlet framework is the standard for automated functional testing. It launches the game, runs test scripts, and reports results. Here’s how to invoke it:

#!/bin/bash

# run_gauntlet_tests.sh — Boot test via Unreal Gauntlet

UE_ROOT="/opt/UnrealEngine"

PROJECT_PATH="${WORKSPACE}/GameProject.uproject"

PLATFORM="${1:-Win64}"

TEST_NAME="${2:-BootTest}"

RESULTS_DIR="${WORKSPACE}/TestResults/Gauntlet"

mkdir -p "${RESULTS_DIR}"

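# RunGauntlet = launch game + run Gauntlet test scripts (full boot/functional tests)

# RunTests = run automation framework tests (unit/smoke/perf, used in Jenkins stages above)

# NOTE: UAT command names differ between engine versions (stock UE5 exposes Gauntlet

# through the RunUnreal command); confirm which commands your engine's UAT provides.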

"${UE_ROOT}/Engine/Build/BatchFiles/RunUAT.sh" \

RunGauntlet \

-project="${PROJECT_PATH}" \

-build=local \

-run \

-test="${TEST_NAME}" \

-platform="${PLATFORM}" \

-configuration=Test \

-nullrhi \

-unattended \

-nosplash \

-nosound \

-ReportExportPath="${RESULTS_DIR}" \

-log="${RESULTS_DIR}/${TEST_NAME}.log" \

-timeout=600

EXIT_CODE=$?

# Parse results for Jenkins

if [ -f "${RESULTS_DIR}/${TEST_NAME}_Summary.json" ]; then

echo "Test results exported to ${RESULTS_DIR}"

else

echo "WARNING: No test summary found"

fi

exit ${EXIT_CODE}

The flags that matter: -nullrhi runs without a GPU (for headless CI machines), -unattended prevents dialog boxes, -timeout=600 kills stuck tests after 10 minutes. Remove -nullrhi if your tests need to validate rendering.

Unity: Test Runner CLI

Unity’s Test Framework runs from the command line. Here’s the invocation plus a sample PlayMode test:

#!/bin/bash

# run_unity_tests.sh — Unity Test Runner CLI

UNITY_PATH="/opt/Unity/Editor/Unity"

PROJECT_PATH="${WORKSPACE}/GameProject"

RESULTS_DIR="${WORKSPACE}/TestResults/Unity"

mkdir -p "${RESULTS_DIR}"

# Run EditMode tests (fast, no game launch)

"${UNITY_PATH}" -batchmode -nographics \

-projectPath "${PROJECT_PATH}" \

-runTests \

-testPlatform EditMode \

-testResults "${RESULTS_DIR}/editmode-results.xml" \

-logFile "${RESULTS_DIR}/editmode.log"

# Run PlayMode tests (launches game, slower)

"${UNITY_PATH}" -batchmode -nographics \

-projectPath "${PROJECT_PATH}" \

-runTests \

-testPlatform PlayMode \

-testResults "${RESULTS_DIR}/playmode-results.xml" \

-logFile "${RESULTS_DIR}/playmode.log"

// BootTest.cs — Unity PlayMode test that verifies the game launches

using System.Collections;

using NUnit.Framework;

using UnityEngine;

using UnityEngine.TestTools;

using UnityEngine.SceneManagement;

public class BootTest

{

[UnityTest]

public IEnumerator GameBootsToMainMenu()

{

// Load the boot scene

SceneManager.LoadScene("Boot", LoadSceneMode.Single);

yield return new WaitForSeconds(5f);

// Verify we reached the main menu

var mainMenu = GameObject.Find("MainMenuCanvas");

Assert.IsNotNull(mainMenu, "Main menu did not appear after boot");

}

[UnityTest]

public IEnumerator AllScenesLoadWithoutErrors()

{

var sceneCount = SceneManager.sceneCountInBuildSettings;

for (int i = 0; i < sceneCount; i++)

{

var scenePath = SceneUtility.GetScenePathByBuildIndex(i);

SceneManager.LoadScene(i, LoadSceneMode.Single);

yield return new WaitForSeconds(2f);

// Verify the correct scene actually loaded (not just any scene)

Assert.AreEqual(scenePath, SceneManager.GetActiveScene().path,

$"Expected scene {scenePath} but got {SceneManager.GetActiveScene().path}");

}

}

}

The boot test is your single highest-value automated test. Write this one first, run it on every build, and you’ll catch the majority of “the game doesn’t even launch” issues before QA wastes time on them.
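Outside any particular engine, a boot test reduces to three steps: launch the build, wait for evidence that it reached the main menu, and fail on a crash or hang. A rough Python sketch of that shape, assuming your game prints a marker line such as MAIN_MENU_READY to stdout and then exits on its own (both are assumptions for illustration; boot_test and FAKE_GAME are hypothetical names):

```python
import subprocess
import sys
from typing import List

# Stand-in for a real game executable: a tiny process that prints the marker
FAKE_GAME = [sys.executable, "-c", "print('MAIN_MENU_READY')"]


def boot_test(command: List[str], ready_marker: str, timeout_s: float) -> bool:
    """Pass only if the process exits cleanly in time and printed the marker."""
    try:
        proc = subprocess.run(
            command, capture_output=True, text=True, timeout=timeout_s
        )
    except subprocess.TimeoutExpired:
        return False  # hung during boot: treat as a failure
    return proc.returncode == 0 and ready_marker in proc.stdout
```

In CI you would replace FAKE_GAME with the packaged build's launch command and turn the boolean into the script's exit code.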

Performance Budgets and Gates

A build that runs at 15 FPS is not a successful build. Performance budgets make this explicit: define hard limits, fail the build if they’re exceeded.

Define Your Budgets

| Metric | PC (Recommended) | PS5 | Switch |
|---|---|---|---|
| Frame time | < 16.67ms (60 FPS) | < 16.67ms (60 FPS) | < 33.33ms (30 FPS) |
| Memory | < 8 GB | < 12 GB (of 16 GB) | < 3.5 GB (of 4 GB) |
| Load time (initial) | < 15s | < 10s (SSD) | < 30s |
| Draw calls | < 3,000/frame | < 2,500/frame | < 1,500/frame |
| Texture memory | < 4 GB | < 6 GB | < 1.5 GB |
| Disk size (build) | < 80 GB | < 80 GB | < 16 GB |

These are starting points. Adjust for your game’s genre and art style. An open-world RPG has different budgets than a puzzle game.
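One way to keep budgets like these enforceable is to store them as data instead of hard-coding numbers into each check. The sketch below copies three rows of the table into a dictionary; BUDGETS, budget_violations, and the metric key names are illustrative choices, not something a particular tool requires.

```python
from typing import Dict, List

# Upper limits per platform, copied from the budget table
BUDGETS: Dict[str, Dict[str, float]] = {
    "PC": {"frame_time_ms": 16.67, "memory_gb": 8.0, "draw_calls": 3000},
    "PS5": {"frame_time_ms": 16.67, "memory_gb": 12.0, "draw_calls": 2500},
    "Switch": {"frame_time_ms": 33.33, "memory_gb": 3.5, "draw_calls": 1500},
}


def budget_violations(platform: str, measured: Dict[str, float]) -> List[str]:
    """Return one message per metric that is missing or not under its limit."""
    violations = []
    for metric, limit in BUDGETS[platform].items():
        value = measured.get(metric)
        if value is None:
            violations.append(f"{metric}: no measurement recorded")
        elif value >= limit:
            violations.append(f"{metric}: {value} is not under the {limit} budget")
    return violations
```

Treating a missing measurement as a violation is a deliberate choice in this sketch: a benchmark that silently stops reporting a metric should not pass the gate.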

Performance Gate in Jenkins

stage('Performance Gate') {

when {

anyOf {

expression { params.BUILD_TYPE == 'Shipping' }

triggeredBy 'TimerTrigger'

}

}

steps {

script {

// Run the performance benchmark on a GPU-equipped agent.

// -nullrhi is deliberately omitted: frame-time numbers need a real renderer.

sh """

${env.UE_ROOT}/Engine/Build/BatchFiles/RunUAT.sh RunTests \

-project="\${WORKSPACE}/GameProject.uproject" \

-testfilter="Project.Performance.Benchmark" \

-reportexportpath="\${WORKSPACE}/TestResults/Perf" \

-log

"""

// Parse results and enforce budgets

// This file must be written by your test harness (see C++ example below)

// readJSON is provided by the Pipeline Utility Steps plugin

def perfResults = readJSON file: 'TestResults/Perf/perf_summary.json'

def failures = []

if (perfResults.avg_frame_time_ms > 16.67) {

failures.add("Frame time ${perfResults.avg_frame_time_ms}ms exceeds 16.67ms budget")

}

if (perfResults.peak_memory_mb > 8192) {

failures.add("Memory ${perfResults.peak_memory_mb}MB exceeds 8GB budget")

}

if (perfResults.avg_draw_calls > 3000) {

failures.add("Draw calls ${perfResults.avg_draw_calls} exceeds 3000 budget")

}

if (failures.size() > 0) {

error("Performance budget exceeded:\n${failures.join('\n')}")

}

echo "Performance gate PASSED: ${perfResults.avg_frame_time_ms}ms frame time, " +

"${perfResults.peak_memory_mb}MB memory, ${perfResults.avg_draw_calls} draw calls"

}

}

post {

always {

// Archive for trend tracking

archiveArtifacts artifacts: 'TestResults/Perf/**', allowEmptyArchive: true

}

}

}

Unreal Engine: Stat Capture Automation

// Pseudocode — adapt to your engine version and stat capture method

//

// PerfBenchmarkTest.cpp — UE5 automated performance capture (illustrative)

#include "Misc/AutomationTest.h"

#include "Tests/AutomationCommon.h" // declares AutomationOpenMap

#include "Engine/World.h"

#include "HAL/PlatformMemory.h"

IMPLEMENT_SIMPLE_AUTOMATION_TEST(FPerfBenchmarkTest,

"Project.Performance.Benchmark",

EAutomationTestFlags::ClientContext | EAutomationTestFlags::StressFilter)

bool FPerfBenchmarkTest::RunTest(const FString& Parameters)

{

// Load the benchmark map. AutomationOpenMap reports success as a bool.

if (!AutomationOpenMap(TEXT("/Game/Maps/PerfBenchmark")))

{

AddError(TEXT("Benchmark map failed to load"));

return false;

}

// --- What to capture ---

// 1. Frame times: use FEngineLoop tick or stat unit, NOT FPlatformProcess::Sleep

// UE5's FAutomationTestBase provides AddCommand() for latent operations

// that tick the engine properly between frames

// 2. Memory: FPlatformMemory::GetStats() for physical memory usage

// 3. Draw calls: GNumDrawCallsRHI (RHI stat counter)

//

// General shape:

// - Warm up for ~60 frames (latent commands that tick the engine)

// - Sample ~300 frames of real engine ticks

// - Collect: frame time (ms), peak memory (MB), draw call count

// - Write results to JSON for Jenkins to parse

// Calculate stats from collected samples.

// Placeholders so the example compiles; fill these from your sample buffer.

float AvgFrameTime = 0.0f;

float MaxFrameTime = 0.0f;

int32 AvgDrawCalls = 0;

// Memory stats: PeakUsedPhysical is the high-water mark, matching the gate's peak_memory_mb

const FPlatformMemoryStats MemStats = FPlatformMemory::GetStats();

const float PeakMemoryMB = MemStats.PeakUsedPhysical / (1024.0f * 1024.0f);

// Log results, using the same key names the Jenkins gate reads from perf_summary.json

UE_LOG(LogTemp, Display, TEXT("PERF_RESULT: avg_frame_time_ms=%.2f"), AvgFrameTime);

UE_LOG(LogTemp, Display, TEXT("PERF_RESULT: max_frame_time_ms=%.2f"), MaxFrameTime);

UE_LOG(LogTemp, Display, TEXT("PERF_RESULT: avg_draw_calls=%d"), AvgDrawCalls);

UE_LOG(LogTemp, Display, TEXT("PERF_RESULT: peak_memory_mb=%.0f"), PeakMemoryMB);

// Assert budgets

TestTrue(TEXT("Average frame time within budget"), AvgFrameTime < 16.67f);

TestTrue(TEXT("No frame spikes above 33ms"), MaxFrameTime < 33.33f);

TestTrue(TEXT("Draw calls within budget"), AvgDrawCalls < 3000);

TestTrue(TEXT("Memory within budget"), PeakMemoryMB < 8192.0f);

// Write results JSON for Jenkins performance gate

// (your test harness must output this file for the gate to read)

return true;

}

The exact API depends on your engine version. UE5’s FAutomationTestBase provides AddCommand() for latent operations that tick the engine properly. The pattern above shows the shape of a perf test: warm up, sample, calculate, assert.
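The C++ sketch logs PERF_RESULT lines, while the Jenkins gate reads perf_summary.json, so something has to turn one into the other. One possible bridge, assuming the log lines keep the key=value shape shown above and that you control the key names so they match what the gate reads (parse_perf_log and write_summary are hypothetical helpers):

```python
import json
import re
from typing import Dict

# Matches lines such as "LogTemp: Display: PERF_RESULT: avg_frame_time_ms=14.20"
PERF_LINE = re.compile(r"PERF_RESULT:\s*(\w+)=(-?\d+(?:\.\d+)?)")


def parse_perf_log(log_text: str) -> Dict[str, float]:
    """Collect every PERF_RESULT key=value pair; a later line overrides an earlier one."""
    return {key: float(value) for key, value in PERF_LINE.findall(log_text)}


def write_summary(log_text: str, out_path: str) -> Dict[str, float]:
    """Write the parsed metrics as JSON and return them."""
    metrics = parse_perf_log(log_text)
    with open(out_path, "w", encoding="utf-8") as handle:
        json.dump(metrics, handle, indent=2)
    return metrics
```

Writing the JSON directly from the test is the alternative; parsing the log has the advantage that it also works for runs where the engine exits before the test can flush a file.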

Unity: Performance Testing Framework

// PerformanceBenchmark.cs — Unity Performance Testing Framework

using System.Collections;

using NUnit.Framework;

using Unity.PerformanceTesting;

using UnityEngine;

using UnityEngine.TestTools;

using UnityEngine.SceneManagement;

using UnityEngine.Profiling;

public class PerformanceBenchmark

{

[UnityTest, Performance]

public IEnumerator MainMenuFrameTime()

{

SceneManager.LoadScene("MainMenu", LoadSceneMode.Single);

yield return new WaitForSeconds(3f); // Let scene settle

// Measure frame time: 60 warmup frames, then 300 recorded frames

yield return Measure.Frames()

.WarmupCount(60)

.MeasurementCount(300)

.Run();

// Budget: 60 FPS = 16.67ms

// The Performance Testing Framework records SampleGroups

// that can be asserted against in the test results

}

[UnityTest, Performance]

public IEnumerator GameplayMemoryBudget()

{

SceneManager.LoadScene("GameplayLevel01", LoadSceneMode.Single);

yield return new WaitForSeconds(10f);

long totalMemory = Profiler.GetTotalAllocatedMemoryLong();

float memoryMB = totalMemory / (1024f * 1024f);

Measure.Custom(new SampleGroup("TotalMemoryMB", SampleUnit.Megabyte), memoryMB);

// Assert memory budget

Assert.Less(memoryMB, 8192f,

$"Memory usage {memoryMB:F0}MB exceeds 8GB budget");

yield return null;

}

[UnityTest, Performance]

public IEnumerator LoadTimeWithinBudget()

{

float startTime = Time.realtimeSinceStartup;

SceneManager.LoadScene("GameplayLevel01", LoadSceneMode.Single);

yield return new WaitUntil(() =>

SceneManager.GetActiveScene().name == "GameplayLevel01" &&

SceneManager.GetActiveScene().isLoaded);

float loadTime = Time.realtimeSinceStartup - startTime;

Measure.Custom(new SampleGroup("LoadTimeSeconds", SampleUnit.Second), loadTime);

Assert.Less(loadTime, 15f,

$"Load time {loadTime:F1}s exceeds 15s budget");

}

}

Track trends, not just pass/fail. A frame time of 15ms today is fine. But if it was 12ms last week and 10ms the week before, it is trending in the wrong direction and will cross the budget soon. Archive your performance results (the Jenkins pipeline above does this) and chart them over time. A simple spreadsheet works. Grafana is better.
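A trend check can be as small as comparing the latest nightly against the average of the previous few runs. A minimal sketch, assuming you have a list of past average frame times; the five-run window and 10% tolerance are arbitrary starting values to tune:

```python
from statistics import mean
from typing import Sequence


def is_regressing(history_ms: Sequence[float], latest_ms: float,
                  window: int = 5, tolerance: float = 0.10) -> bool:
    """Flag the latest frame time if it exceeds the recent average by more than `tolerance`."""
    recent = list(history_ms)[-window:]
    if not recent:
        return False  # no baseline yet
    baseline = mean(recent)
    return latest_ms > baseline * (1.0 + tolerance)
```

The point of a check like this is that it can fire while the absolute number is still inside the budget, which is exactly when a regression is cheapest to fix.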

Asset-level budgets matter too. A single 4K uncompressed texture shouldn’t blow your memory budget. We’ll cover this in the next section.

Asset Quality Gates

Assets cause as many build problems as code. An artist checks in a 200MB uncompressed TGA, and suddenly the build is 2GB larger and load times doubled. Automated validation catches this before it reaches the build.

Automated Content Validation

#!/usr/bin/env python3
"""
asset_validator.py: validate game assets against project standards.

Run as a pre-commit check or Jenkins stage.
"""

import json
import re
import sys
from dataclasses import dataclass, field
from pathlib import Path
from typing import List


@dataclass
class ValidationResult:
    path: str
    rule: str
    severity: str  # "error" or "warning"
    message: str


@dataclass
class AssetValidator:
    project_root: Path
    results: List[ValidationResult] = field(default_factory=list)

    # --- Rules (adjust per project) ---
    TEXTURE_RULES = {
        "max_dimension": 4096,
        "must_be_power_of_two": True,
        "allowed_formats": [".png", ".tga", ".exr"],
        "max_file_size_mb": 50,
    }

    # Reference values only: poly and LOD counts are not checked by this script
    MESH_RULES = {
        "max_triangles": {
            "character": 100_000,
            "prop": 25_000,
            "environment": 50_000,
            "weapon": 30_000,
        },
        "require_lods": True,
        "min_lod_count": 2,
    }

    # Reference values only: audio files are scanned but not yet validated
    AUDIO_RULES = {
        "allowed_formats": [".wav", ".ogg"],
        "max_sample_rate": 48000,
        "max_file_size_mb": 100,
    }

    NAMING_RULES = {
        "textures": r"^T_[A-Z][a-zA-Z0-9]+_(D|N|R|M|E|AO)$",  # T_AssetName_D (diffuse)
        "meshes": r"^SM_[A-Z][a-zA-Z0-9]+$",  # SM_AssetName
        "materials": r"^M_[A-Z][a-zA-Z0-9]+$",  # M_AssetName
        "blueprints": r"^BP_[A-Z][a-zA-Z0-9]+$",  # BP_AssetName
    }

    def validate_texture(self, filepath: Path):
        """Check texture dimensions, format, and file size."""
        ext = filepath.suffix.lower()
        if ext not in self.TEXTURE_RULES["allowed_formats"]:
            self.results.append(ValidationResult(
                str(filepath), "texture_format", "error",
                f"Invalid format {ext}. Allowed: {self.TEXTURE_RULES['allowed_formats']}"
            ))

        size_mb = filepath.stat().st_size / (1024 * 1024)
        if size_mb > self.TEXTURE_RULES["max_file_size_mb"]:
            self.results.append(ValidationResult(
                str(filepath), "texture_size", "error",
                f"Texture {size_mb:.1f}MB exceeds {self.TEXTURE_RULES['max_file_size_mb']}MB limit"
            ))

        # Dimension checks require PIL/Pillow
        try:
            from PIL import Image
        except ImportError:
            return  # Skip dimension checks if Pillow is not installed

        try:
            with Image.open(filepath) as img:
                w, h = img.size
        except OSError:
            return  # Pillow cannot decode this file (for example .exr); skip dimension checks

        max_dim = self.TEXTURE_RULES["max_dimension"]
        if w > max_dim or h > max_dim:
            self.results.append(ValidationResult(
                str(filepath), "texture_dimension", "error",
                f"Texture {w}x{h} exceeds {max_dim}x{max_dim} limit"
            ))
        if self.TEXTURE_RULES["must_be_power_of_two"]:
            # A power of two has exactly one bit set, so n & (n - 1) is zero
            if (w & (w - 1)) != 0 or (h & (h - 1)) != 0:
                self.results.append(ValidationResult(
                    str(filepath), "texture_pot", "warning",
                    f"Texture {w}x{h} is not power-of-two"
                ))

    def validate_mesh(self, filepath: Path):
        """Check mesh file size. Poly count checks require trimesh or pyfbx."""
        size_mb = filepath.stat().st_size / (1024 * 1024)
        max_size_mb = 100  # Adjust per project
        if size_mb > max_size_mb:
            self.results.append(ValidationResult(
                str(filepath), "mesh_size", "error",
                f"Mesh {size_mb:.1f}MB exceeds {max_size_mb}MB limit"
            ))
        # TODO: For poly count validation, use trimesh or engine-specific tools:
        # import trimesh
        # mesh = trimesh.load(filepath)
        # if len(mesh.faces) > max_triangles: ...

    def validate_naming(self, filepath: Path):
        """Check asset naming conventions."""
        name = filepath.stem
        ext = filepath.suffix.lower()
        pattern = None
        if ext in [".png", ".tga", ".exr"]:
            pattern = self.NAMING_RULES.get("textures")
        elif ext in [".fbx", ".obj"]:
            pattern = self.NAMING_RULES.get("meshes")
        if pattern and not re.match(pattern, name):
            self.results.append(ValidationResult(
                str(filepath), "naming_convention", "warning",
                f"'{name}' doesn't match naming convention: {pattern}"
            ))

    def validate_directory(self, directory: Path, extensions: list):
        """Validate all matching assets in a directory."""
        self.total_scanned = 0
        for ext in extensions:
            for filepath in directory.rglob(f"*{ext}"):
                self.total_scanned += 1
                self.validate_naming(filepath)
                if ext in [".png", ".tga", ".exr"]:
                    self.validate_texture(filepath)
                elif ext in [".fbx", ".obj"]:
                    self.validate_mesh(filepath)

    def run(self):
        """Run all validations and return exit code."""
        content_dir = self.project_root / "Content"
        if content_dir.exists():
            self.validate_directory(
                content_dir,
                [".png", ".tga", ".exr", ".fbx", ".obj", ".wav", ".ogg"])

        # Report
        errors = [r for r in self.results if r.severity == "error"]
        warnings = [r for r in self.results if r.severity == "warning"]
        print(f"\n{'='*60}")
        print(f"Asset Validation: {len(errors)} errors, {len(warnings)} warnings")
        print(f"{'='*60}")
        for r in self.results:
            icon = "ERROR" if r.severity == "error" else "WARN"
            print(f" [{icon}] {r.path}")
            print(f" {r.message}")

        # Export JSON for Jenkins
        report = {
            "total_scanned": getattr(self, "total_scanned", 0),
            "assets_with_findings": len(set(r.path for r in self.results)),
            "errors": len(errors),
            "warnings": len(warnings),
            "details": [vars(r) for r in self.results],
        }
        with open("asset_validation_report.json", "w") as f:
            json.dump(report, f, indent=2)

        # Non-zero exit code fails the Jenkins stage or pre-commit hook
        return 1 if errors else 0


if __name__ == "__main__":
    project_root = Path(sys.argv[1]) if len(sys.argv) > 1 else Path(".")
    validator = AssetValidator(project_root=project_root)
    sys.exit(validator.run())

Two-Stage Validation: Automated + Human

Not everything can be automated. “Does this character model look right?” is a human question. The pattern:

- Automated checks run first. Dimensions, file size, naming conventions. For meshes: file size limits (poly counts require engine-specific tools or libraries like trimesh). Instant, on every commit.

- Human review runs second. Art direction, visual quality, animation feel. Only for assets that pass automated checks.

This is where commit tags from Part 1 come back. Use tags like #approval or #review in your commit messages to trigger human review workflows. A Perforce trigger (from Part 2) can parse these tags and route to the right reviewer.
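The tag check itself is simple string matching. A sketch of what a trigger script might do with a changelist description, assuming tags are written as # followed by a lowercase word (the exact tag syntax from Part 1 may differ, and review_tags is a hypothetical helper):

```python
import re
from typing import Set

# Assumed convention: a tag is '#' plus a lowercase word at the start of a token
TAG_PATTERN = re.compile(r"(?<!\S)#([a-z][a-z0-9_-]*)")
REVIEW_TAGS = {"approval", "review"}


def review_tags(description: str) -> Set[str]:
    """Return the review-related tags found in a changelist description."""
    return set(TAG_PATTERN.findall(description)) & REVIEW_TAGS


def needs_human_review(description: str) -> bool:
    """True when at least one review-related tag is present."""
    return bool(review_tags(description))
```

The lookbehind keeps strings like issue#review from counting as tags, so only deliberately written tags route a change to a reviewer.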

The automated checks save human reviewers from wasting time on obviously broken assets. If a texture is 16K or non-power-of-two, there’s no point asking the art director to review it.

Platform Certification Pre-Checks

Console certification — Sony TRC, Microsoft XR, Nintendo Lotcheck — is the final boss of shipping a game. Fail cert, and you’re looking at weeks of delays. The key insight: treat cert requirements as test cases from day one.

| Requirement Category | Sony (TRC) | Microsoft (XR) | Nintendo (Lotcheck) | Automatable? |
|---|---|---|---|---|
| Controller disconnect handling | ✓ | ✓ | ✓ | Yes |
| Save data corruption recovery | ✓ | ✓ | ✓ | Yes |
| Language/locale support | ✓ | ✓ | ✓ | Partially |
| Achievement/trophy integration | ✓ | ✓ | ✓ | Yes |
| Network disconnect handling | ✓ | ✓ | ✓ | Yes |
| Framerate requirements | ✓ | ✓ | ✓ | Yes |
| Age rating display | ✓ | ✓ | ✓ | Yes |
| Terms of service display | ✓ | ✓ | ✓ | Yes |
| User privilege checks | ✓ | ✓ | - | Yes |
| Suspend/resume handling | ✓ | ✓ | ✓ | Partially |
| Content during loading | - | ✓ | - | Partially |

Most of these can be automated. Controller disconnect? Simulate it programmatically. Save corruption? Write garbage to a save file and verify the game recovers. These tests should run on every Shipping build.

stage('Cert Pre-Check') {

when {

expression { params.BUILD_TYPE == 'Shipping' }

}

steps {

script {

echo "Running platform certification pre-checks for ${params.PLATFORM}..."

// Run cert-specific test suite

sh """

${env.UE_ROOT}/Engine/Build/BatchFiles/RunUAT.sh RunTests \

-project="\${WORKSPACE}/GameProject.uproject" \

-testfilter="Project.Certification.${params.PLATFORM}" \

-platform=${params.PLATFORM} \

-configuration=Shipping \

-reportexportpath="\${WORKSPACE}/TestResults/Cert" \

-log

"""

// Platform-specific checks

if (params.PLATFORM == 'PS5') {

sh """

# Validate TRC-specific requirements

# Set TRC_VERSION to match your current SDK requirements

python3 scripts/cert_check.py \

--platform ps5 \

--build-dir "\${WORKSPACE}/Builds/${params.PLATFORM}" \

--trc-version "\${TRC_VERSION}" \

--report "\${WORKSPACE}/TestResults/Cert/trc_report.json"

"""

} else if (params.PLATFORM == 'XboxSeriesX') {

sh """

# Validate XR-specific requirements

# Set XR_VERSION to match your current SDK requirements

python3 scripts/cert_check.py \

--platform xbox \

--build-dir "\${WORKSPACE}/Builds/${params.PLATFORM}" \

--xr-version "\${XR_VERSION}" \

--report "\${WORKSPACE}/TestResults/Cert/xr_report.json"

"""

}

}

}

post {

always {

junit 'TestResults/Cert/**/*.xml'

archiveArtifacts artifacts: 'TestResults/Cert/**', allowEmptyArchive: true

}

failure {

slackSend channel: '#cert-tracking',

message: "⚠️ Cert pre-check FAILED for ${params.PLATFORM} Shipping build (CL ${params.CHANGELIST})"

}

}

}

This stage only runs for Shipping builds — matching the build types from Part 3. There’s no point running cert checks on Development builds.

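Start early. Don’t wait until you’re two weeks from submission to discover your game doesn’t handle controller disconnect. Add cert test cases to your nightly suite the moment you have a playable build; with the stage above, that means either building nightlies as Shipping or adding triggeredBy 'TimerTrigger' to its when block.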
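As an illustration of treating a cert requirement as a test case, here is the save-corruption check in miniature. The loader is a hypothetical stand-in that stores saves as JSON; a real test would call your game's own save system, but the pattern of writing garbage and asserting a clean fallback stays the same.

```python
import json
import os
import tempfile
from typing import Any, Dict


def default_save() -> Dict[str, Any]:
    """Fresh save state used when nothing valid can be loaded."""
    return {"level": 1, "inventory": []}


def load_save(path: str) -> Dict[str, Any]:
    """Hypothetical loader: fall back to a fresh save when the file is unusable."""
    try:
        with open(path, "r", encoding="utf-8") as handle:
            data = json.load(handle)
    except (OSError, ValueError):  # missing file, bad encoding, or invalid JSON
        return default_save()
    if not isinstance(data, dict) or "level" not in data:
        return default_save()
    return data


def corrupt_save_recovers() -> bool:
    """Write garbage bytes to a save file and check the loader recovers."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "slot0.sav")
        with open(path, "wb") as handle:
            handle.write(b"\x00\xff\x13garbage\xfe")
        return load_save(path) == default_save()
```

The same structure extends to truncated files and saves from older versions, which are worth covering with their own fixtures.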

Visual Regression Testing

Screenshot comparison catches visual bugs that functional tests miss: broken shaders, wrong texture assignments, UI layout drift, lighting changes.

The approach: capture screenshots at defined camera positions, compare against known-good reference images using perceptual diff algorithms (SSIM or perceptual hash).

The honest take: visual regression testing is hard to do well. Non-deterministic rendering (TAA, particle systems, dynamic lighting) causes false positives. Different GPUs produce slightly different output. Most sub-AAA studios should prioritize the earlier sections of this post first.

If you do pursue it:

- Unreal Engine has built-in screenshot comparison in its automation framework (FAutomationTestScreenshotCompare)

- ImageMagick can compute perceptual diffs between screenshots (compare -metric SSIM)

- Set generous thresholds (95% similarity rather than 99%) to avoid false positives

- Only compare in deterministic scenarios: fixed camera, no particles, no TAA, static lighting

- Use it for UI screens first (menus, HUD, inventory) — these are the most deterministic
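For a sense of what a perceptual hash does, here is a dependency-free sketch of average hash, one of the simplest variants. It is not SSIM and it is not what Unreal or ImageMagick use internally; it assumes images are already decoded into rows of 0-255 grayscale values and are at least 8 pixels on each side.

```python
from typing import List, Sequence

Gray = Sequence[Sequence[int]]  # rows of 0-255 grayscale values


def average_hash(image: Gray, size: int = 8) -> List[int]:
    """Downsample to size x size by block averaging, then threshold at the mean."""
    height, width = len(image), len(image[0])
    cells = []
    for gy in range(size):
        y0, y1 = gy * height // size, (gy + 1) * height // size
        for gx in range(size):
            x0, x1 = gx * width // size, (gx + 1) * width // size
            block = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    return [1 if cell > mean else 0 for cell in cells]


def similarity(a: Gray, b: Gray) -> float:
    """Fraction of matching hash bits: 1.0 means identical hashes."""
    hash_a, hash_b = average_hash(a), average_hash(b)
    return sum(x == y for x, y in zip(hash_a, hash_b)) / len(hash_a)
```

Because the hash only records which regions are brighter than average, a small uniform brightness shift leaves it unchanged while a layout change flips bits, which is the tolerance you want for UI screens.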

Visual regression is a “nice to have.” The boot test, performance gates, and cert pre-checks are “need to have.”

How ButterStack Helps

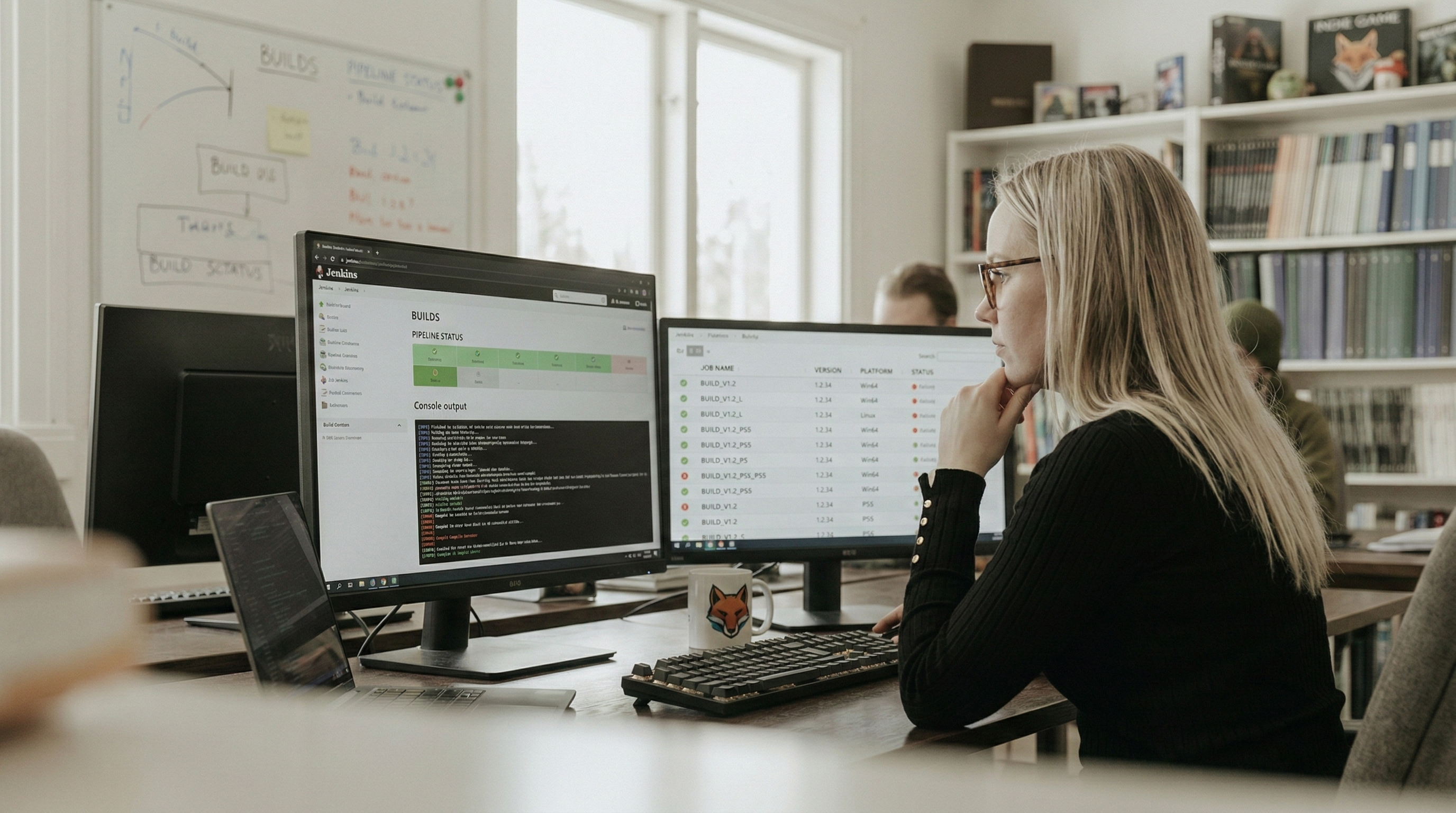

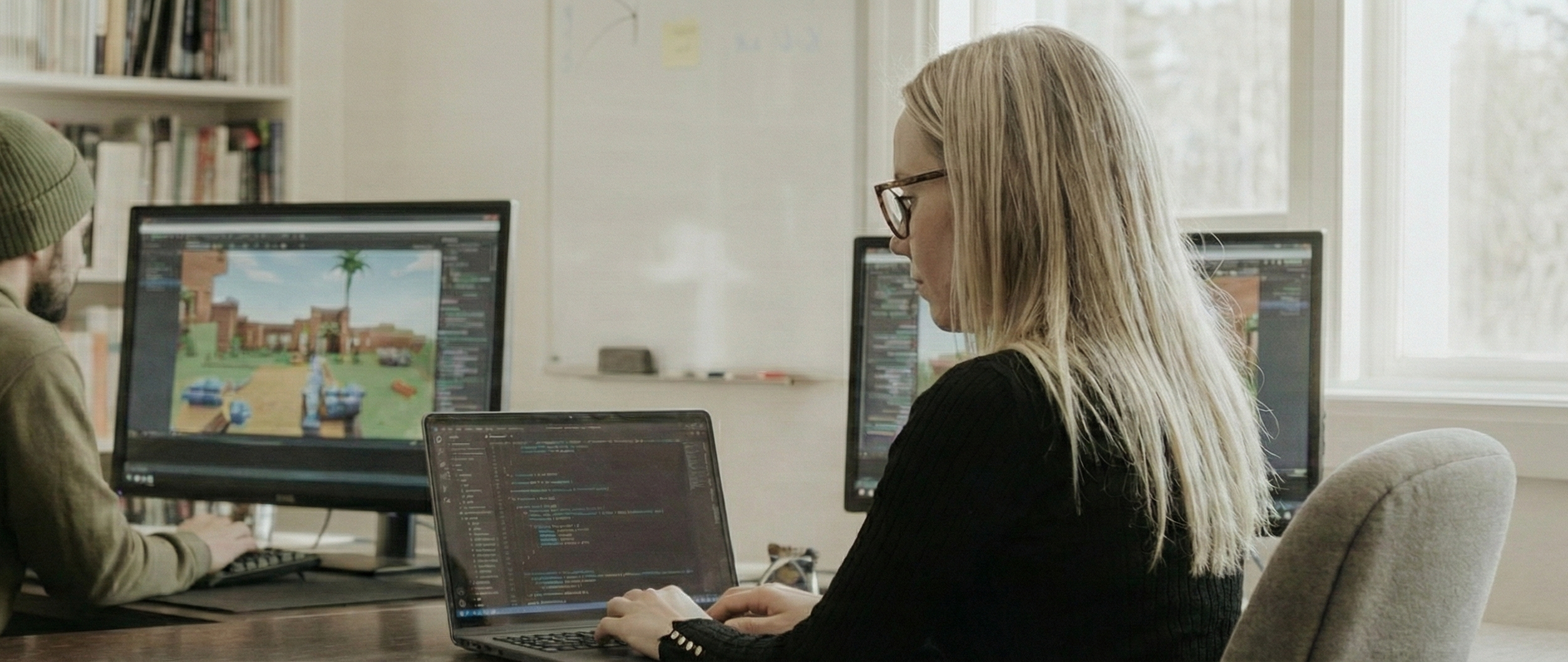

ButterStack doesn’t replace your test framework — it gives you visibility into what’s happening across your entire build-test-deploy pipeline. Here’s how ButterStack fits into this picture:

AI Build Failure Analysis

When a build fails, ButterStack’s AI analyzes the log output and categorizes the failure:

- compile_error: Code compilation failures

- linker_error: Linking failures (missing symbols, library issues)

- asset_cook_failure: Asset cooking/processing failures

- shader_error: Shader compilation failures

- test_failure: Automated test failures

- timeout: Build exceeded time limits

- oom: Out of memory during build

- dependency_issue: Missing or incompatible dependencies

- config_error: Configuration/setup issues

Each failure is rated by severity (critical, high, medium, low), attributed to the developer whose commit likely caused it, and matched against known patterns. Over time, this builds a knowledge base: “shader errors in this project are usually caused by missing permutation defines” becomes a suggestion the next time a similar error appears.

3-Step Build Pipeline

Every build in ButterStack flows through three stages:

- External Build: Your Jenkins/CI build runs and reports status back

- Asset tracking: Which assets were included, approval status, and cook-time data from engine logs

- Approval: Human approval gate with passed_pending_approval status

A build can compile successfully but still sit in passed_pending_approval until a lead reviews the asset changes. This prevents untested or unreviewed content from reaching QA.

Asset Approval Workflows

Individual assets have their own approval lifecycle:

- pending: Awaiting review

- approved: Cleared by a reviewer

- denied: Rejected with feedback

- ignored: Explicitly skipped (e.g., placeholder assets)

Approval types include manual, automated, review, and quality_check. ButterStack tracks approval duration — how long assets sit in review — so you can identify bottlenecks in your content pipeline.

Asset Lineage

When QA finds a bug, the first question is “who changed this?” ButterStack traces from commit to asset to build. Assets are linked to the changelists and commits that modified them and the build runs that included them. When your task tracker is integrated, this chain extends back to the originating task.

This is the traceability chain from Part 1 made concrete: Task → Commit → Build → Asset → Approval.

Game Engine Build Observation

For Unreal and Unity builds, ButterStack tracks engine-specific metrics:

- Per-asset cook tracking (which assets take longest to cook)

- DDC (Derived Data Cache) hit rates (referencing the shared DDC from Part 3)

- Shader permutation counts

- Compression ratios (source vs. cooked size)

- Build phase tracking: initializing → cooking → compiling shaders → packaging → archiving

This turns “the build took 3 hours” into “the build took 3 hours because shader compilation took 90 minutes and 47 assets had zero DDC hits.”

Coming soon: Asset optimization recommendations, lineage visualization, and real-time activity tracking are in development.

Get started with ButterStack →

FAQ

We have zero automated tests. Where do we start?

The boot test. Write one test that launches your game and waits for the main menu to appear. Run it on every build. This single test catches missing assets, broken initialization, shader compile failures, and crash-on-launch bugs. You can write it in an afternoon. Everything else builds on this foundation.

How do you test multiplayer/networked gameplay?

Automated multiplayer testing requires launching multiple game instances and coordinating between them. Unreal’s Gauntlet supports multi-process tests. Unity requires custom harnesses. Start with connection tests (can two clients connect and see each other?), then add gameplay tests (can they interact?). Dedicated server builds make this easier since you can run headless server instances on CI machines.

Our tests are flaky. They pass sometimes and fail other times.

Quarantine flaky tests: move them to a separate suite that runs but doesn’t fail the build. Never ignore them. Investigate and fix the root cause. Common culprits: timing-dependent assertions (wait on a condition with a timeout, as in WaitForCondition, instead of a fixed delay like WaitForSeconds), shared state between tests, non-deterministic physics, and file system race conditions. A flaky test that you ignore will eventually hide a real failure.
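The condition-versus-fixed-delay point can be sketched in a few lines of Python. This `wait_for_condition` helper is a generic illustration written for this post, not any engine's built-in API, and the `level` object in the comments is hypothetical.

```python
import time


def wait_for_condition(predicate, timeout_s=10.0, poll_interval_s=0.05):
    """Poll `predicate` until it returns a truthy value or `timeout_s` elapses.
    Returns True as soon as the condition holds, False on timeout."""
    deadline = time.monotonic() + timeout_s
    while True:
        if predicate():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_interval_s)


# Flaky pattern: assumes the level always loads within 5 seconds.
#   time.sleep(5)
#   assert level.is_loaded
#
# Sturdier pattern: passes as soon as the level is loaded, fails only on timeout.
#   assert wait_for_condition(lambda: level.is_loaded, timeout_s=30)
```

The polling version is both faster on quick machines and more tolerant of slow ones, which removes one of the most common sources of intermittent failures.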

Should QA write automated tests?

QA should define test cases. Whether they write the automation depends on their skillset. Some QA engineers write excellent automation. Others are better at exploratory testing and edge-case discovery. In the best setup, QA defines what to test, engineers automate the repetitive cases, and QA spends its manual time on what can’t be automated: game feel, art review, and edge cases.

How long should test suites take?

| Suite | Budget | Rationale |
|---|---|---|
| Unit tests | < 5 min | Must run on every commit without blocking developers |
| Smoke/boot | < 10 min | Runs on every build; fast enough to not delay feedback |
| Integration | < 30 min | Nightly; can afford more time |
| Full suite | < 2 hours | Release candidates; thoroughness over speed |

If your unit tests take more than 5 minutes, split them. If your full suite takes more than 2 hours, parallelize across multiple machines.
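One way to keep these budgets honest is to check them mechanically. The sketch below encodes the table's limits and flags any suite that reaches or exceeds its budget. The suite keys and the `over_budget` function are names invented for this example, and how you collect the measured durations from your CI runs is left open.

```python
# Budgets from the table above, in minutes.
SUITE_BUDGETS_MIN = {
    "unit": 5,
    "smoke": 10,
    "integration": 30,
    "full": 120,
}


def over_budget(durations_min, budgets=SUITE_BUDGETS_MIN):
    """Return {suite: (actual, budget)} for every suite whose measured
    duration meets or exceeds its budget. Unknown suites are ignored."""
    return {
        suite: (actual, budgets[suite])
        for suite, actual in durations_min.items()
        if suite in budgets and actual >= budgets[suite]
    }


# Example: unit tests have crept to 6.5 minutes and should be split.
violations = over_budget({"unit": 6.5, "smoke": 4.0, "full": 95})
for suite, (actual, budget) in violations.items():
    print(f"{suite}: {actual} min (budget < {budget} min)")
```

Run as a reporting step first; once the numbers are stable, the same check can fail the build when a suite drifts past its budget.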

Is manual QA still needed?

Absolutely. Automated tests answer “does it work?” Manual QA answers “is it good?” Game feel, art direction, difficulty balance, user experience — these are human judgments. Automation frees QA from repetitive regression testing so they can focus on the subjective quality questions that only humans can answer. The best QA teams spend 80% of their time on exploration and 20% on regression.

How do we handle test data and save files?

Generate test data deterministically. Don’t rely on save files from someone’s dev machine; they’ll be out of date within a week. Write test fixtures that create known game states: a save at level 5 with specific inventory, a profile with specific settings. Keep the fixture definitions in your repository alongside the tests, and regenerate the actual save data as part of your test setup so it always matches the current build.
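A deterministic fixture generator might look something like the following sketch. The field names, the item pool, and the JSON format are all assumptions made for illustration; a real generator would mirror your game's actual save schema and serialization.

```python
import json
import random


def make_save_fixture(level, seed=1234):
    """Build a known game state from explicit inputs and a fixed seed.
    Field names are hypothetical; mirror your real save schema."""
    rng = random.Random(seed)  # local RNG, unaffected by global random state
    item_pool = ["sword", "shield", "potion", "key", "map"]
    return {
        "schema_version": 1,
        "level": level,
        "inventory": sorted(rng.sample(item_pool, 3)),
        "gold": rng.randint(0, 500),
        "settings": {"difficulty": "normal", "subtitles": True},
    }


def write_fixture(path, fixture):
    # sort_keys keeps the file layout stable across runs, so diffs stay meaningful.
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(fixture, fh, indent=2, sort_keys=True)
```

Because the generator uses its own seeded `random.Random` instance, calling `make_save_fixture(5)` returns the same state on every machine and every run, which is exactly what a save file copied from a dev machine cannot promise.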

What’s Next

Your builds now compile, get tested, pass performance gates, and survive cert pre-checks. But a tested build sitting on a Jenkins server isn’t shipping. In Part 5, we’ll cover deployment: Steam uploads, console submission workflows, staging environments, and rollback strategies. The last mile from “build approved” to “players can download it.”

Conclusion

The traceability chain from Part 1 was:

Task → Commit → Build → Test → Deploy

We’ve spent this post filling in the Test link. Without it, your chain is:

Task → Commit → Build → ??? → Hope for the best

Here’s what to do next, in order:

- Write a boot test. Today. One test that launches your game and verifies the main menu appears. This alone is transformative.

- Add it to Jenkins. Use the pipeline stages from this post. Run it on every build.

- Define performance budgets. Write down your frame time, memory, and load time targets. Even if you don’t automate the checks yet, having the numbers written down changes how your team thinks about performance.

- Automate asset validation. Start with texture sizes and naming conventions. Low effort, high payoff.

- Add cert checks to Shipping builds. Don’t wait until submission month.

Every bug your QA team finds should become a test case. That crash in the tutorial? Write a test that plays through the tutorial. That performance regression from the uncompressed textures? Add a texture size check. Over time, your automated suite becomes a living record of every mistake you’ve caught — and a guarantee you’ll never ship that mistake again.

Start small. Build up. A single boot test is infinitely better than no tests at all.

Thanks!

Ryan L’Italien

Founder and CEO of ButterStack

Want to see what pipeline observability looks like? Try ButterStack free and connect your first integration in minutes.

Or just email me at: ryan@butterstack.com.